Apache Spark

Apache Cassandra

MongoDB

These are well-known names in the tech industry. Each of them has earned a commendable place in the field of distributed computing: Apache Spark as a unified analytics framework for parallel processing, and Apache Cassandra and MongoDB as leaders in NoSQL databases. While each of them offers great benefits, like in-memory massively parallel processing, faster read responses, and flexible schema design, using them together in an online transactional processing (OLTP) application requires some tactical maneuvering.

This blog will focus on tips for running Apache Spark applications on NoSQL (Apache Cassandra and MongoDB) backends. These tips are based on issues my team came across while building a cloud-native platform to process customer credit card transactions. Building and managing distributed applications in a cloud-native environment brings its own challenges. Anyone who is into distributed systems will agree that TCP/IP is the lifeblood of distributed systems, so let’s visit an imaginary place I like to call TCP/IP sPark. I hope your time in TCP/IP sPark helps you overcome some of those challenges...

Welcome to TCP/IP sPark

Who doesn't like going to theme parks? They are fun and memorable, so in this blog we are going to TCP/IP sPark, which has lots of interesting rides in its famous CassandraLand and MongoLand sections. If you are like me -- someone who enjoys theme park rides and building/managing distributed applications -- follow along.

We will start our journey by going to CassandraLand and learning a few lessons/tips for better ridership in TCP/IP sPark. Then we will go to MongoLand and pick up a few more. I hope you will find this journey interesting and joyous!

To properly enjoy your visit and take more memories home, some refreshers on Apache Spark, Mongo and Cassandra may help.

CassandraLand - Two tips for using Cassandra with Spark

The signature ride here in CassandraLand is the Token Ring Ferris Wheel. Riders go around and around and each time the wheel reaches the ground, their entry is registered in CassandraLand like so:

Cassandra Lesson 1 - Cassandra key sequence matters

Oh! Season pass holder DOE has gone missing after riding the Token Ring Ferris Wheel and park security is trying to find his whereabouts within CassandraLand.

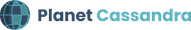

While querying for DOE’s details as shown above, our Spark application became unhappy and started spinning its own wheel. Ferris wheels > loading icons.

Why did this happen? The lesson here is that the Cassandra key sequence matters while querying. Cassandra uses the partition key to decide where each row lives on disk, so if a query does not follow the key sequence, Cassandra cannot go straight to the partition that holds the data. Instead, it ends up scanning the table for each query, straining the Cassandra database cluster.
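To make the difference concrete, here is a minimal sketch in plain Python rather than real Cassandra or Spark code. It assumes a hypothetical ride log keyed as `PRIMARY KEY ((ride_date), pass_id)`; the class name, column names, and dates are all made up for illustration. It only counts how many rows each style of query has to touch.

```python
class RideLog:
    """Toy model of a table with PRIMARY KEY ((ride_date), pass_id).

    Hypothetical schema for illustration only; this is not a Cassandra client.
    """

    def __init__(self):
        self.partitions = {}  # ride_date -> {pass_id: rider_name}
        self.rows_read = 0    # rows touched by the most recent query

    def insert(self, ride_date, pass_id, rider_name):
        self.partitions.setdefault(ride_date, {})[pass_id] = rider_name

    def find_by_key(self, ride_date, pass_id):
        # Partition key first, then clustering key: jump to one partition.
        self.rows_read = 0
        partition = self.partitions.get(ride_date, {})
        if pass_id in partition:
            self.rows_read = 1
            return partition[pass_id]
        return None

    def find_by_name(self, rider_name):
        # No partition key in the query: every partition must be scanned.
        self.rows_read = 0
        matches = []
        for ride_date, partition in self.partitions.items():
            for pass_id, name in partition.items():
                self.rows_read += 1
                if name == rider_name:
                    matches.append((ride_date, pass_id))
        return matches


log = RideLog()
for day in range(1, 31):
    for pass_id in range(100):
        log.insert(f"2024-06-{day:02d}", pass_id, f"rider-{pass_id}")
log.insert("2024-06-15", 999, "DOE")

found = log.find_by_key("2024-06-15", 999)
keyed_reads = log.rows_read    # one row touched
matches = log.find_by_name("DOE")
scan_reads = log.rows_read     # every row in every partition touched
print(found, keyed_reads, matches, scan_reads)
```

Both queries find DOE, but the keyed lookup touches a single row while the name-only search walks the whole table. A real cluster adds network hops and disk reads to each of those touches, which is roughly why the Spark job appeared to hang.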

But no worries! FooBar has identified this issue and fixes it like below:

It seems that DOE had gone to the gift shop to buy TCP/IP sPark souvenirs. It’s always important to get souvenirs to remember your park visit, just like it’s important to mind your Cassandra key sequences.

Cassandra Lesson 2 - Use case-based data modeling

Another popular CassandraLand ride is the Partitioner Roller Coaster. If you select the correct seat (partition key), you will get back to the base station in exhilarating milliseconds. While customers are enjoying their roller coaster ride, their information is persisted like below so the ride operators can track who has ridden it each day:

This is important because like most roller coasters, the Partitioner Roller Coaster provides lockers for keeping your things in while you’re on the ride. But some customers might like to keep their things close by, or are in a hurry to board the ride and forget to use the lockers. If you’re one of these people, it’s easy for things to fall out of your pockets, or to be so excited to move on to another ride that you forget your things.

So whenever the roller coaster operator finds something under the coaster or in one of the seats, they need to find all the riders processed that day.

While it is possible to find the information in the Customer Schema, it is not the optimal way to do so. In Cassandra, querying a table in a way it was not partitioned for is considered something of an anti-pattern. Cassandra data is partitioned, and the use case defines the schema.

The lesson here is to design your schema based on the use case in the first place. If new query patterns appear as usage evolves, the data producer has to duplicate the data into a table keyed for each new query.
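As a rough illustration of "one table per query", the sketch below uses plain Python dictionaries as stand-ins for two Cassandra tables. The table names, fields, rider names, and dates are hypothetical. The producer writes every ride event to both tables, so the lost-and-found question becomes a single partition lookup.

```python
from collections import defaultdict

# Two hypothetical "tables", each keyed for exactly one query.
rides_by_customer = defaultdict(list)  # partition key: pass_id
rides_by_ride_day = defaultdict(list)  # partition key: (ride, ride_date)


def record_ride(pass_id, name, ride, ride_date):
    """The producer duplicates each event into every table that serves a query."""
    row = {"pass_id": pass_id, "name": name, "ride": ride, "ride_date": ride_date}
    rides_by_customer[pass_id].append(row)
    rides_by_ride_day[(ride, ride_date)].append(row)


def riders_on(ride, ride_date):
    # Lost-and-found query: one partition lookup, no scan over all customers.
    return [row["name"] for row in rides_by_ride_day.get((ride, ride_date), [])]


record_ride(1, "DOE", "Partitioner Roller Coaster", "2024-06-15")
record_ride(2, "ROE", "Partitioner Roller Coaster", "2024-06-15")
record_ride(1, "DOE", "Token Ring Ferris Wheel", "2024-06-15")
record_ride(3, "POE", "Partitioner Roller Coaster", "2024-06-16")

todays_riders = riders_on("Partitioner Roller Coaster", "2024-06-15")
does_rides = len(rides_by_customer[1])
print(todays_riders, does_rides)
```

The trade-off is extra writes and storage in exchange for reads that never leave one partition, which is usually the right side of the bargain in Cassandra.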

MongoLand - Two Tips for using MongoDB with Spark

After gathering some lessons and tips from CassandraLand, our park visitors are heading to the much-awaited MongoLand.

While customers are going round and round on the Schemaless Carousel, their information is persisted in the backend like so:

Mongo Lesson 1 - Manage MongoDB connections properly

From our visit to CassandraLand, we know DOE is a season pass holder to TCP/IP sPark. They have been bumped up into a higher membership tier and we need to update this in the system. But in order to process this information update, the carousel has to stop, and the other riders are unhappy that their ride is being slowed down and interrupted.

The lesson here is that MongoDB connections should be handled at the JVM or partition level like below:

If connections are not handled at the partition or JVM level, your application may open lots of unwanted connections, depending on where in the job they are created (once per record, for example). This has the potential to bring down your application and database cluster, as well as making the other carousel riders unhappy.
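Here is a small sketch of why the placement matters. It does not use Spark or a MongoDB driver: `FakeMongoClient` is a hypothetical stand-in that only counts how many times a connection is opened, and the nested lists stand in for an RDD's partitions.

```python
class FakeMongoClient:
    """Hypothetical stand-in for a MongoDB client; it only counts opens."""

    opened = 0

    def __init__(self):
        FakeMongoClient.opened += 1
        self.updated = []

    def update_tier(self, pass_id, tier):
        self.updated.append((pass_id, tier))

    def close(self):
        pass


def update_per_record(partition):
    # Anti-pattern: a brand-new connection for every single record.
    for pass_id, tier in partition:
        client = FakeMongoClient()
        client.update_tier(pass_id, tier)
        client.close()


def update_per_partition(partition):
    # One connection shared by all records in the partition.
    client = FakeMongoClient()
    try:
        for pass_id, tier in partition:
            client.update_tier(pass_id, tier)
    finally:
        client.close()


# Four "partitions" of 250 records each, standing in for an RDD's partitions.
partitions = [[(p * 250 + i, "gold") for i in range(250)] for p in range(4)]

FakeMongoClient.opened = 0
for part in partitions:
    update_per_record(part)
per_record_opens = FakeMongoClient.opened

FakeMongoClient.opened = 0
for part in partitions:
    update_per_partition(part)
per_partition_opens = FakeMongoClient.opened

print(per_record_opens, per_partition_opens)
```

In an actual PySpark job, a function shaped like `update_per_partition` is the kind of thing you would hand to `foreachPartition`, so each task opens one connection instead of one per record. Treat the details here as assumptions to adapt to your own driver and job.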

Mongo Lesson 2 - Indexes are very helpful

After recovering from the Schemaless Carousel debacle, we still haven't processed DOE’s membership update.

While we attempt to do so, we get the spinning Ferris wheel -- or in this case, the carousel -- again, just like in our visit to CassandraLand.

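The lesson here is that MongoDB indexes are very important and helpful in cases where you need to find and update specific information, such as DOE’s new park membership status. Without an index on the field you filter by, MongoDB has to scan the collection to find the document to update.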
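The effect can be sketched with a toy collection in plain Python. This is not MongoDB itself: the `Members` class, the `pass_id` field, and the document count are assumptions made up for the example. It simply counts how many documents an update has to examine with and without an index.

```python
class Members:
    """Toy collection: a list of documents plus an optional index on pass_id."""

    def __init__(self, docs):
        self.docs = docs
        self.index = None
        self.docs_examined = 0  # documents touched by the most recent update

    def create_index(self):
        # Map each pass_id to the position of its document.
        self.index = {doc["pass_id"]: pos for pos, doc in enumerate(self.docs)}

    def update_tier(self, pass_id, tier):
        self.docs_examined = 0
        if self.index is not None:
            # Indexed path: jump straight to the matching document.
            pos = self.index.get(pass_id)
            if pos is None:
                return False
            self.docs_examined = 1
            self.docs[pos]["tier"] = tier
            return True
        # Unindexed path: walk the collection until the document turns up.
        for doc in self.docs:
            self.docs_examined += 1
            if doc["pass_id"] == pass_id:
                doc["tier"] = tier
                return True
        return False


members = Members([{"pass_id": i, "tier": "basic"} for i in range(10_000)])

members.update_tier(9_999, "silver")
examined_without_index = members.docs_examined

members.create_index()
members.update_tier(9_999, "gold")
examined_with_index = members.docs_examined

print(examined_without_index, examined_with_index, members.docs[9_999]["tier"])
```

With a real driver such as PyMongo, the rough equivalent would be calling `create_index` on the field used in the update filter. Which fields deserve an index depends on your own query patterns.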

Hope your visit to TCP/IP sPark has been enjoyable!

I hope your journey through TCP/IP sPark, CassandraLand, and MongoLand was beneficial and memorable. Remember that when working with Cassandra and Spark, you should ensure your key sequence is correct and your schema is planned around your use case. And don’t forget that with MongoDB and Spark, you should manage your connections properly and use indexes, particularly for targeted updates.

See you another time in the magic land of TCP/IP sPark!