Architect’s Guide to Using NoSQL for Real-time AI: Part 1

How Apache Cassandra® is used in modern AI applications

This is the first article in a series meant to guide enterprise architects on how to use NoSQL databases, specifically Cassandra, in real-time AI-powered applications. The series will cover the principles of real-time AI, its workflows, and how to leverage Cassandra to achieve the performance outcomes that end users expect. Many experienced Cassandra users are already familiar with the topic at hand. By studying their approach to building AI systems that harness Cassandra’s scalability and performance capabilities, you can gain valuable insights.

Real-time AI is just one crucial aspect of the entire field of AI. Because so much has been written about AI already, we’ve provided a minimal set of recommended content for enterprise architects to review. These suggested resources are not comprehensive enough to transform you into a full-time AI practitioner. Most of the content we selected is from Google Cloud, and represents the kind of resources that Google’s own engineers and enterprise architects use. We also suggest you look at some refresher content on general NoSQL topics, as we will give tips on implementing NoSQL throughout this series.

Suggested pre-reading:

- Introduction to Machine Learning

- GCP MLOps White Paper

- Pay specific attention to the following deep-dive topics:

- Prediction Serving

- Continuous Monitoring

- Dataset and Feature Management

- Architect’s Guide to NoSQL

Recommended courses and reading

- ML Crash Course

- Andrew Ng’s MLOps Course Videos

- Google AI Adoption Framework (Business Focus)

- Guide to high-quality ML solutions

Real-time AI is a technology that makes predictions and decisions based on the most recent real-time data or user events available, enabling immediate responses to changing conditions. Traditionally, both model training and inference (prediction) have been done in batch, typically overnight or periodically throughout the day. Today, modern machine learning systems perform inference on the most recent data in order to provide the most accurate prediction possible. A small set of companies like TikTok and Google have pushed the real-time paradigm further by including on-the-fly training of models as new data comes in.

Enterprise architects should consider the push to real-time AI as just one component of Data-centric AI. Data-centric AI is based on the idea that improving the data quality, rather than the quality of the models, will yield better long-term results for most enterprise use cases.

One of the easiest ways to improve the data quality is to rely on the latest data (real-time data) to make predictions and train models.

For the rest of this document, we will focus on using real-time data to make predictions (online model serving), mainly because training models on real-time data is still an active field of research.

MLOps lifecycle (courtesy of GCP) – Red indicates capabilities that need to be augmented for real-time AI

Across industries, DevOps and DataOps have been widely adopted as methodologies to improve quality and reduce the time to market of software engineering and data engineering initiatives. With the rapid growth in machine learning (ML) systems, similar approaches need to be developed in the context of ML engineering, which handles the unique complexities of the practical applications of ML. This is the domain of MLOps. MLOps is a set of standardized processes and technology capabilities for building, deploying, and operationalizing ML systems rapidly and reliably.

Real-time AI requires you to augment your existing MLOps capabilities across:

- Data management – Because the data used to make predictions are now real-time, new data processing systems – namely stream processing – need to be added to enable real-time feature engineering.

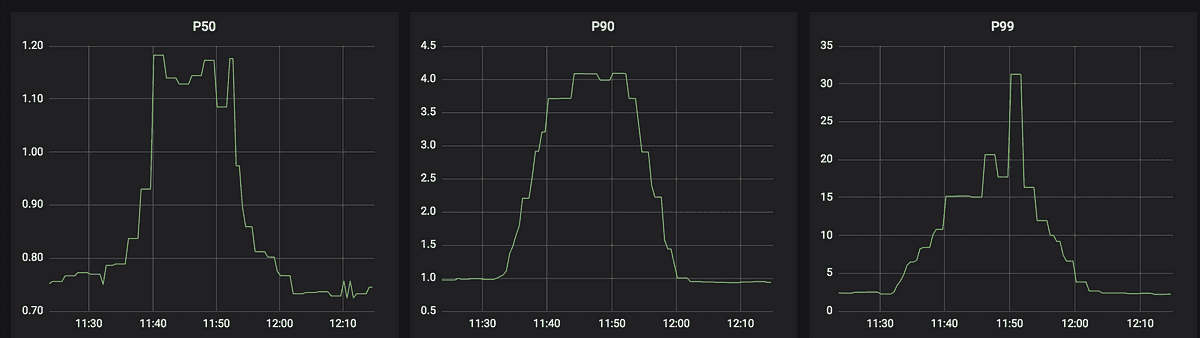

- Model serving (or prediction serving) – Because the feature data is constantly being updated, the predictions also need to be made in real time. This involves augmenting your model-serving system so that it computes predictions at request time.

- Model monitoring – Because the predictions are very dynamic, real-time monitoring of the predictions becomes very important for determining whether the model is stale or whether there are issues within the real-time data processing pipelines.
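To make the data management item above more concrete, here is a minimal sketch of one real-time feature: a count of events per entity over a sliding time window. It is an illustration only, not part of any stream processing framework. The class name `SlidingWindowCounter`, the key `restaurant:42`, and the 60-second window are all assumptions made for the example, and the sketch assumes events arrive in timestamp order.

```python
from collections import deque
from typing import Deque, Dict


class SlidingWindowCounter:
    """Counts events per key over the last `window_seconds` seconds.

    Hypothetical helper for illustration; assumes events for a key
    arrive in non-decreasing timestamp order.
    """

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events: Dict[str, Deque[float]] = {}  # key -> event timestamps

    def add(self, key: str, timestamp: float) -> None:
        """Record one event for `key` at `timestamp` (in seconds)."""
        self._events.setdefault(key, deque()).append(timestamp)

    def count(self, key: str, now: float) -> int:
        """Return how many events for `key` fall inside the window ending at `now`."""
        window = self._events.get(key, deque())
        # Evict timestamps that have fallen out of the window.
        while window and window[0] <= now - self.window_seconds:
            window.popleft()
        return len(window)


# Usage: orders received by a restaurant in the last 60 seconds.
counter = SlidingWindowCounter(window_seconds=60)
for ts in (0.0, 30.0, 70.0):
    counter.add("restaurant:42", ts)
orders_last_minute = counter.count("restaurant:42", now=80.0)
```

In a production pipeline this kind of aggregation would typically run inside a stream processor, with the resulting feature value written to the feature repository for the serving engine to read.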

For each of these areas, we will do a deep-dive into the workflow. From a systems perspective, the following also need to change (red boxes):

- Data & feature repository – must serve both batch and real-time data features

- Serving engine – instead of serving pre-calculated predictions, predictions need to be calculated and served on the fly

- Serving logs – must save data in real time, and make it available to real-time monitoring engines

- Monitoring engine – real-time processing is needed to detect both data and concept drift

- NoSQL databases have a flexible and fluid data model – this is really important for AI systems because it allows you to experiment with AI models more easily and readily

- There is no single NoSQL system that satisfies all use cases. Databases like HBase favor high query throughput over low query latency, which is essential for tasks like model training. Tools like Apache Cassandra®, by contrast, favor low query latency over throughput.

- AI systems do not need strong consistency models to operate effectively – which allows you to choose various NoSQL databases with higher scale, lower latency, and reduced costs.

- High-throughput, low-latency writes, such as those Cassandra provides, are very important for real-time data repositories.

- Fault tolerance is mandatory for Real-time AI because applications cannot afford to fail in production.
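As a rough illustration of two points from the lists above (a feature repository that serves both batch and real-time features, and the observation that AI systems can work without strong consistency), the sketch below keeps the newest value per feature using last-write-wins on a write timestamp. Cassandra resolves conflicting writes in a broadly similar timestamp-based way, but this in-memory class is only a stand-in: `FeatureRepository`, its methods, and the feature names are hypothetical and are not a Cassandra API.

```python
from typing import Any, Dict, Tuple


class FeatureRepository:
    """In-memory stand-in for a feature repository (illustration only).

    Each feature cell stores (write_timestamp, value). A write replaces
    the cell only if its timestamp is at least as new as the stored one,
    so late-arriving batch writes cannot overwrite fresher real-time data.
    """

    def __init__(self) -> None:
        # entity_id -> feature_name -> (write_timestamp, value)
        self._rows: Dict[str, Dict[str, Tuple[float, Any]]] = {}

    def write(self, entity_id: str, feature: str, value: Any, timestamp: float) -> None:
        row = self._rows.setdefault(entity_id, {})
        current = row.get(feature)
        if current is None or timestamp >= current[0]:
            row[feature] = (timestamp, value)

    def read(self, entity_id: str) -> Dict[str, Any]:
        """Return the latest known value of every feature for one entity."""
        return {name: value for name, (_, value) in self._rows.get(entity_id, {}).items()}


# Usage: a batch job and a streaming job write to the same entity.
repo = FeatureRepository()
repo.write("restaurant:42", "avg_prep_minutes", 18.0, timestamp=100.0)  # batch feature
repo.write("restaurant:42", "orders_last_10m", 7, timestamp=205.0)      # real-time feature
repo.write("restaurant:42", "orders_last_10m", 3, timestamp=150.0)      # late write, ignored
features = repo.read("restaurant:42")
```

Because each feature is keyed by entity and name, new features can be added without a schema migration, which mirrors the flexible data model point above.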

Uber Eats is a classic example of real-time AI in action. There are lots of videos on how Uber has built its own real-time AI platform, dubbed Michelangelo, using Cassandra. We recommend watching this one.

Uber has three entities that are amenable to a real-time data flow:

- Customer data – what is in the customer’s order

- Restaurant data – how busy the restaurant is and how quickly it can prepare the meals

- Driver data – how busy traffic is around the customer and the restaurant

Uber stores and processes its real-time data mostly in Cassandra because:

- Scalability – lots of data needs to be stored for every customer / restaurant / driver

- Low-latency queries – all reads are served directly from Cassandra

- Fault-Tolerance – the app is not allowed to go down
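To show how features from the three entities above might feed a prediction computed at request time, here is a toy sketch. It is not Uber's model or Michelangelo's API: the `OrderFeatures` fields, the function name, and every weight are placeholders invented for illustration, and a real system would load a trained model and fetch the features from the database.

```python
from dataclasses import dataclass


@dataclass
class OrderFeatures:
    """Hypothetical features, one per entity described above."""
    items_in_order: int           # customer data
    restaurant_open_orders: int   # restaurant data
    traffic_delay_minutes: float  # driver data


# Placeholder weights, invented for illustration only (not learned values).
BASE_MINUTES = 10.0
MINUTES_PER_ITEM = 1.5
MINUTES_PER_OPEN_ORDER = 0.5


def predict_delivery_minutes(features: OrderFeatures) -> float:
    """Compute a delivery-time estimate on the fly from the freshest features."""
    return (
        BASE_MINUTES
        + MINUTES_PER_ITEM * features.items_in_order
        + MINUTES_PER_OPEN_ORDER * features.restaurant_open_orders
        + features.traffic_delay_minutes
    )


# Usage: the serving engine assembles features at request time, then predicts.
estimate = predict_delivery_minutes(
    OrderFeatures(items_in_order=2, restaurant_open_orders=8, traffic_delay_minutes=6.0)
)
```

The point of the sketch is the shape of the workflow: the prediction is only as fresh as the three feature lookups behind it, which is why read latency on the feature repository matters so much.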

The rapidly evolving world of real-time AI applications presents a unique set of challenges and opportunities for enterprise architects. This series of articles aims to provide a comprehensive guide to harnessing the power of NoSQL databases, specifically Cassandra, in the development and deployment of real-time AI-powered applications. By examining successful implementations, such as Uber Eats, and understanding the critical role of data management, model serving, and model monitoring in the MLOps lifecycle, you can gain valuable insights to help you effectively leverage Cassandra’s scalability and performance capabilities. Real-time AI is an essential component of Data-centric AI. By following the recommended resources and best practices outlined in this series, you will be well-equipped to embark on your journey toward building innovative AI systems that deliver exceptional performance and results.

In the next article, we’ll take a look at processing data in real-time and feature engineering.